Google recently made its most advanced AI model, Gemini 1.5 Pro, available to the general public after releasing it as a developer beta last month.

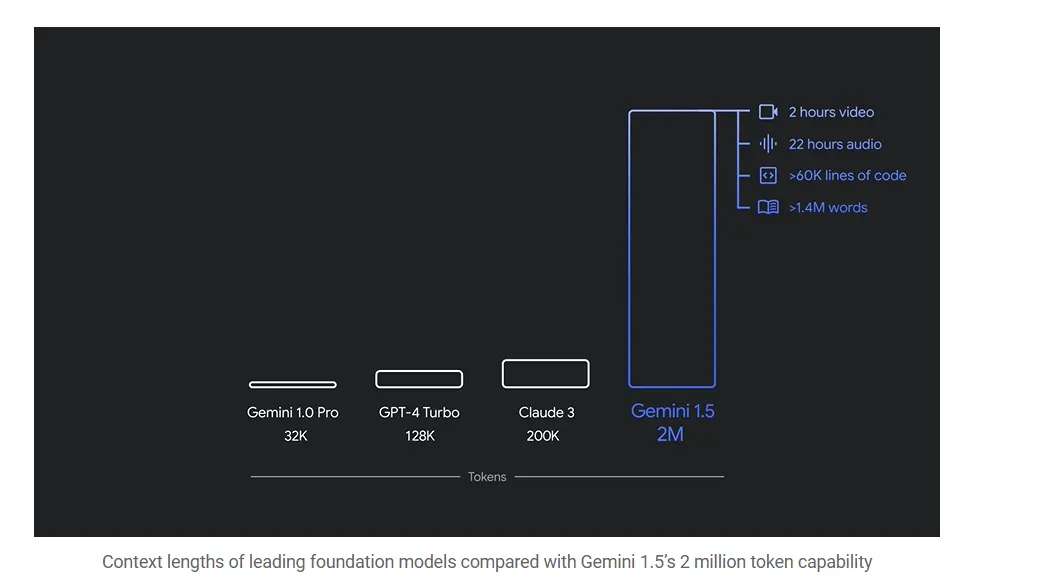

Google’s Gemini 1.5 Pro can perform far more complex tasks than previous AI models. For example, the model can parse entire text libraries, process feature-length Hollywood movies, and sift through nearly an entire day’s worth of audio data. That’s 20 times more data than OpenAI’s GPT-4o can handle and nearly 10 times more information than Anthropic’s Claude 3.5 Sonnet can manage.

According to Google, the goal of Gemini 1.5 Pro is to provide faster and cheaper tools for AI developers. This should enable new use cases and more reliable production deployments.

The model debuted in May, accompanied by videos of beta testers putting Gemini 1.5 Pro’s capabilities to use. For example, machine learning engineer Lukas Atkins fed the model the full Python library and asked it questions to help him solve a problem. “It was perfect,” he said in the video. “It could find specific references to comments in the code and specific requests that people had.”

Another beta tester filmed his entire bookshelf, and Gemini created a database of all the books he owned, a task virtually impossible for traditional AI chatbots.

Gemma 2 is taking the open source world by storm

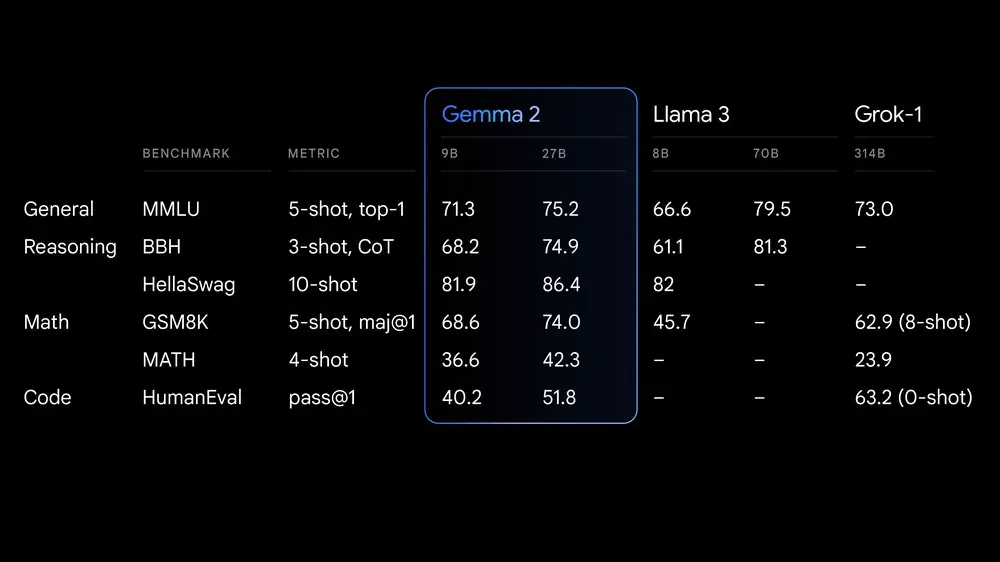

Google has expanded its influence in the open source community by launching Gemma 2 27B today. This open source large language model has quickly taken the top spot as the model with the highest-quality responses, according to the LLM Arena rankings.

According to Google, Gemma 2 “delivers best-in-class performance, runs at incredible speed on a variety of hardware, and integrates effortlessly with other AI tools.” The model is designed to compete with models “more than twice its size,” the company said.

Although the Gemma 2 license allows free access and redistribution, it still differs from traditional open-source licenses such as MIT or Apache. The model is available in a 27B version and a smaller 9B version, and is intended to make AI implementations more accessible and budget-friendly.

This is important for both regular and enterprise users, because a powerful open model like Gemma is highly customizable, unlike closed models. Users can tune their models to specific tasks and protect their data by running the models locally.

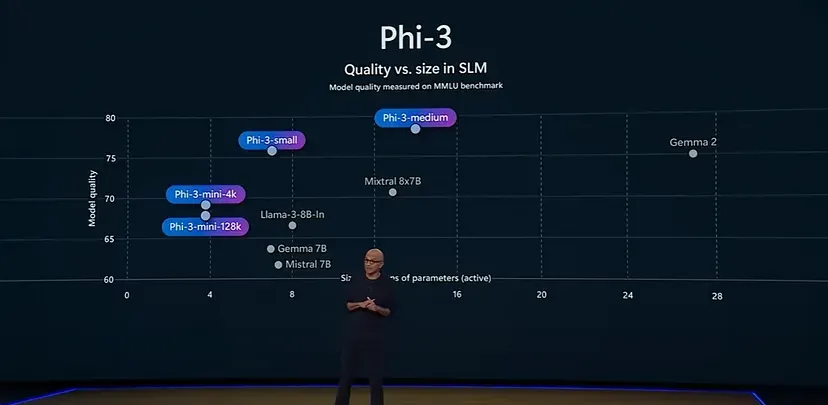

An example of this is Microsoft’s small language model Phi-3, which is specifically tailored to mathematical problems and in that respect can outperform larger models such as Llama-3 and even Gemma 2 itself.

Gemma 2 is now available in Google AI Studio, with model weights available for download from Kaggle and Hugging Face Models. Developers can test the powerful Gemini 1.5 Pro on Vertex AI.

Source: https://cryptobenelux.com/2024/06/30/google-lanceert-krachtigere-ai-versie-gemini-1-5-pro/